AI Content Writing Checklist for SEO

Follow these 5 essential principles to create AI-assisted content that ranks and resonates

It's Okay to Use AI for Content

Google has been crystal clear: it's not about how you create content, but whether you're answering user search intent. The focus is on quality, helpfulness, and relevance (not the tools you use). AI can be a powerful ally in your content creation, as long as you follow these proven principles.

1. Inject Your Experience

AI can generate information, but it can't live your life. This is where you gain an unbeatable advantage. Your personal experience, client stories, real-world lessons, and unique insights add the "Experience" in E-E-A-T that AI simply cannot replicate.

Do this: "When I helped a SaaS client restructure their pricing page in 2023, we saw a 34% increase in conversions within 60 days. The key wasn't adding more features, it was simplifying the decision-making process by reducing options from five tiers to three."

Not this: "Pricing pages are an important part of any website. To optimize your pricing page, consider simplifying your options and making it easier for customers to make decisions. This can lead to increased conversions."

2. Be Precise, Cut the Fluff

Don't chase arbitrary word counts. More words don't equal better content; depth beats length every time. Structure your content to answer questions precisely, especially in your H2s and H3s.

Do this:

H2: How Long Does It Take to Rank on Google?

Most new websites take 3-6 months to rank for competitive keywords, though low-competition terms can rank within weeks.

Factors include domain authority, content quality, and backlink profile.

Not this:

H2: Google Ranking Timeline

When you're thinking about SEO, there are many factors to consider. First, we need to understand search engines.

Google is the most popular search engine in the world and has a complex algorithm...

3. Write at an 8th-Grade Reading Level

This isn't about dumbing down your content; it's about accessibility. The majority of online readers prefer clear, straightforward language. Avoid unnecessary jargon and complex phrasing that creates barriers.

Do this: "Email marketing helps you build relationships with customers by sending them valuable content directly to their inbox."

Not this: "Email marketing facilitates the cultivation of symbiotic customer relationships through the strategic dissemination of value-added digital correspondence to individual electronic mailboxes."

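One rough way to put a number on reading level is the Flesch-Kincaid grade formula. The sketch below is an illustration only: the syllable counter is a crude vowel-group heuristic (an assumption, not a dictionary lookup), so absolute scores run high on single sentences. The useful signal is the gap between the plain and the jargon-heavy version of the email marketing sentence.

```python
import re

def count_syllables(word: str) -> int:
    """Crude heuristic: count groups of consecutive vowels, minimum one."""
    lowered = word.lower()
    count = len(re.findall(r"[aeiouy]+", lowered))
    # A trailing silent "e" usually does not add a syllable.
    if lowered.endswith("e") and count > 1:
        count -= 1
    return max(count, 1)

def flesch_kincaid_grade(text: str) -> float:
    """Flesch-Kincaid grade: 0.39 * words/sentence + 11.8 * syllables/word - 15.59."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        return 0.0
    syllables = sum(count_syllables(w) for w in words)
    return 0.39 * len(words) / len(sentences) + 11.8 * syllables / len(words) - 15.59

plain = (
    "Email marketing helps you build relationships with customers "
    "by sending them valuable content directly to their inbox."
)
dense = (
    "Email marketing facilitates the cultivation of symbiotic customer "
    "relationships through the strategic dissemination of value-added "
    "digital correspondence to individual electronic mailboxes."
)

print(f"plain: {flesch_kincaid_grade(plain):.1f}")
print(f"dense: {flesch_kincaid_grade(dense):.1f}")
```

Shorter sentences pull the score down fastest, so splitting one long sentence in two is usually the cheapest fix.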
4. Back Up Statements with Data

Numbers and statistics aren't optional; they're essential for credibility. Every claim needs supporting data, and every stat needs a link to the high-quality source where you found it. This builds trust with both Google and your readers.

Do this: "According to a 2024 HubSpot study, companies that blog consistently generate 67% more leads per month than those that don't."

Not this: "Blogging is one of the most effective ways to generate leads for your business. Many successful companies use blogging as their primary marketing strategy."

5. Add Supporting Visuals

Remember, you're writing for humans, not just search engines. Break up text walls with images, screenshots, diagrams, charts, and illustrations. Visuals should add value and enhance your points (not just serve as decoration).

Explore Our Latest Insights

Stay updated with our recent blog posts.

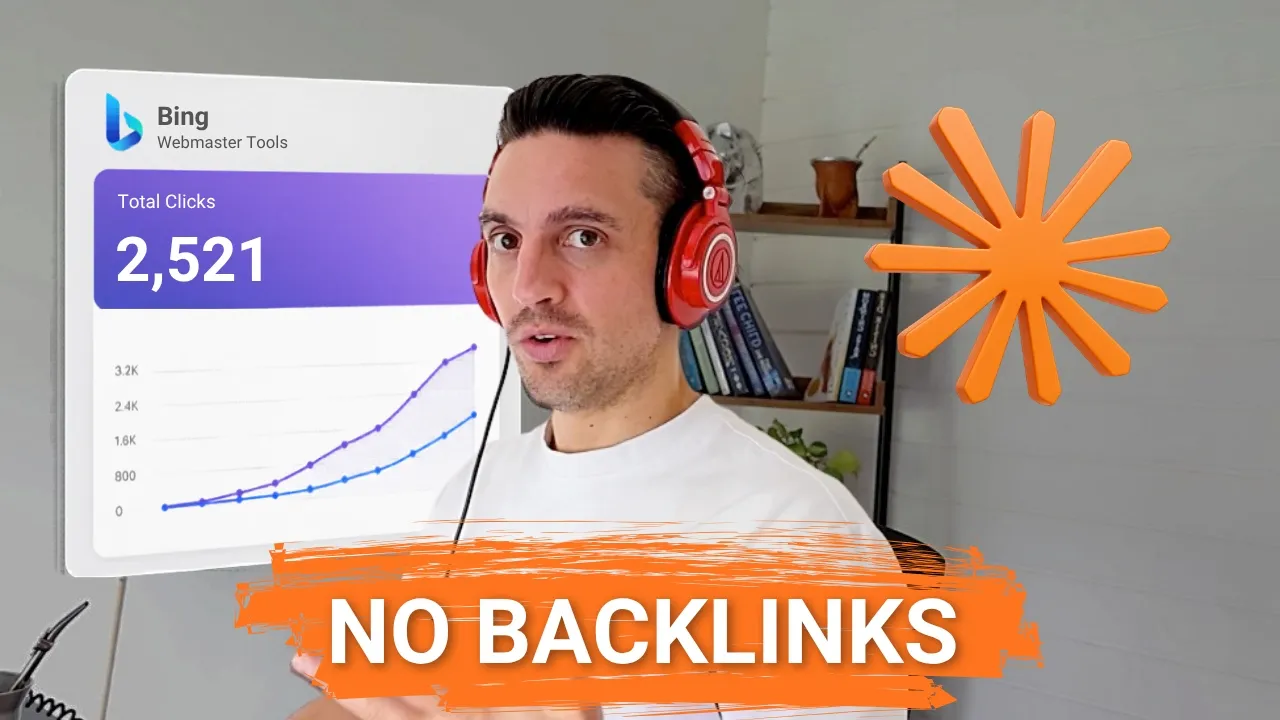

I built a Chilean fuel-price site with Claude Code in a weekend on Astro + Cloudflare. Thirty days later: 2,669 sessions and 2,500+ Bing clicks, traffic from ChatGPT, Perplexity and Copilot, and zero backlinks built. Here is the full workflow, niche to launch.

Can You Really Build and Rank a Website in 30 Minutes With AI?

Yes, the build itself takes about 30 minutes of focused work with Claude Code. Ranking is the patient part, but it happens fast when the fundamentals are in place.

I picked a fictional-sounding niche that was actually huge in Chile: live fuel prices. I bought preciocombustible.cl, opened Claude Code, and let it cook. Thirty days after going live, Google Analytics showed 2,669 sessions, the majority from organic search, plus citations and traffic from ChatGPT, Perplexity and Copilot.

No backlinks. No paid ads. No team. Just a weekend of vibe building on top of solid SEO fundamentals.

How Do You Pick a Niche Worth Building For?

Find a category that gets real search demand but has weak SEO from the incumbents. That gap is your opening.

I noticed fuel prices were chaotic worldwide, so I assumed there would be a country-by-country search habit for live prices. There was. The biggest Chilean competitor was pulling around 24,000 estimated monthly visits and ranking for 689 keywords, but their on-page SEO was rough. That is the dream scenario: clear demand, beatable execution.

A few rules I follow when sniffing out a niche like this:

- Real search volume for the head terms (use any keyword tool to confirm)

- Weak incumbent SEO (title tags, schema, internal linking, page speed)

- A country-code domain (.cl, .ie, .mx) for hyper-local intent

- An API or data source I can pipe into the site to auto-fill pages at scale

If all four boxes are ticked, the build is mostly an execution problem, not a strategy problem.

What Does the Claude Code Workflow Actually Look Like?

I dictate the idea into Otter.ai, paste the transcript into Claude Code, and run Plan Mode + Ultrathink before a single line of code gets written.

Plan Mode forces Claude to think before it builds. Ultrathink dials the reasoning effort up so it actually maps out the architecture, the API calls, the page structure, and the components. I add the competitor URL, the data API I want to use, the domain I bought, and the stack I want (Astro + Cloudflare).

From there, Claude spins up subagents in parallel: one for competitor analysis, one for API exploration, one for keyword research. That parallelism is the unlock. You are not waiting for one agent to finish, you are getting three streams of context at once.

By the time Claude comes back with the plan, the build is essentially de-risked. I just answer a few clarifying questions (open-source map vs. Google Maps, Cloudflare access, etc.) and let it run.

Why Astro + Cloudflare for SEO Sites?

Astro ships zero JavaScript by default, and Cloudflare gives you free global hosting plus a Workers deploy from inside Claude Code. That combination is hard to beat for SEO performance.

Static HTML + edge delivery means your pages load fast, get crawled cleanly, and score well on Core Web Vitals. With Wrangler permissioned inside Claude Code, the agent can push a staging site, attach a custom domain, and ship to production without me touching the Cloudflare dashboard.

Wrangler can do almost everything, except the things that matter most (it cannot purchase or delete a domain), which is exactly the safety boundary you want when you are letting an AI handle deploys.

How Do You Make an AI-Built Homepage Not Look Boring?

Feed Claude a design from a tool that specializes in design. I use Stitch by Google and screenshot the homepage Claude built first.

Then I ask Stitch to redesign it with a clear brief: fun, simple, fuel-price site for Chile. Stitch exports a PNG plus the HTML, and I drop both into the Claude Code folder. Then I tell Claude: take the design in this folder, rebuild the homepage to match it, use the Nano Banana Pro skill for any high-quality images, and add fluid hover animations.

A few minutes later the homepage went from generic to actually inviting. This is the trick most people miss: pair an AI coder with an AI designer. Do not make the coder do both jobs.

How Do You Generate Blog Posts That Match a Single Brand Look?

Tell Claude to write the blog, then make it spawn a side agent to define a permanent image style guide before generating any visuals.

For this site I asked for two posts: why are fuel prices rising in Chile, and where does Chile get its fuel. I told Claude to write roughly 70% of the post using the capsule content technique, link to sources, and include a five-question FAQ with the right schema.
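For reference, "the right schema" for an FAQ block is usually schema.org's FAQPage type, embedded as JSON-LD. Here is a minimal sketch of generating that markup from question and answer pairs. The helper name and the placeholder questions are assumptions for illustration, not what Claude produced for the site.

```python
import json

def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)

# Placeholder content; a real post would carry its five actual questions.
pairs = [
    ("Why are fuel prices rising in Chile?", "Placeholder answer."),
    ("Where does Chile get its fuel?", "Placeholder answer."),
]
markup = faq_jsonld(pairs)
print(markup)
```

The output goes inside a `<script type="application/ld+json">` tag in the page head or body.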

Then I added one line that made everything consistent: launch a sub-agent to set the camera, focal length and style for every image, save it to the project file, and use it on every future image. Now every blog image, every hero, every section graphic looks like it was shot on the same camera in the same lighting. That visual consistency is brand-building on autopilot.

How Do You Hook Up Google Search Console and Analytics?

Two five-minute setups. Both have a Cloudflare shortcut that saves you the DNS pain.

For Google Search Console:

- Add a domain property using your custom domain

- Click Start verification: if Cloudflare and Chrome are both signed into the same account, GSC auto-authorizes through Cloudflare

- Grab your sitemap URL from Claude Code and submit it under Sitemaps
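Before submitting the sitemap, it can be worth a quick sanity check that it lists the pages you expect. This is a small sketch using only the standard library. The sample XML and example.com URLs are placeholders, and it assumes a plain urlset sitemap rather than a sitemap index.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

def sitemap_urls(xml_text: str) -> list[str]:
    """Return every <loc> URL found in a sitemap document."""
    root = ET.fromstring(xml_text)
    return [
        loc.text.strip()
        for loc in root.findall(".//sm:loc", SITEMAP_NS)
        if loc.text
    ]

sample = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/blog/</loc></url>
</urlset>"""

for url in sitemap_urls(sample):
    print(url)
```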

For Google Analytics:

- Create a property, set the country/currency, pick Web as the platform

- Copy the gtag snippet and tell Claude Code to install it site-wide

- Verify with the free Tag Assistant Chrome extension before you celebrate

Pro tip: ask Claude to push the GA changes to production explicitly. The first time I tried this it installed the tag on staging only, and Tag Assistant flagged it. One follow-up prompt and it was live.
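A quick way to catch the staging-only mistake is to look for the gtag loader in the HTML each environment actually serves. This sketch checks page source you have already fetched. The measurement ID and both HTML snippets are placeholders.

```python
import re

# The standard gtag.js loader URL carries the measurement ID as ?id=G-...
GTAG_LOADER = re.compile(r"googletagmanager\.com/gtag/js\?id=(G-[A-Z0-9]+)")

def find_ga_ids(html: str) -> list[str]:
    """Return the distinct GA4 measurement IDs referenced by gtag loaders."""
    return sorted(set(GTAG_LOADER.findall(html)))

staging_html = (
    '<head><script async '
    'src="https://www.googletagmanager.com/gtag/js?id=G-XXXXXXXXXX">'
    "</script></head>"
)
production_html = "<head><title>Fuel prices</title></head>"

print("staging:", find_ga_ids(staging_html))
print("production:", find_ga_ids(production_html))
```

An empty list for production means the tag never shipped, which is the case Tag Assistant flagged.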

How Do You Fix PageSpeed Without Knowing What You Are Doing?

Screenshot the Google PageSpeed Insights report, drop it into Claude Code, and ask it to read the image and fix the issues.

Claude will catch the obvious wins: PNGs that should be WebP, lazy-loading on images below the fold, unused CSS. I literally just say: mobile is loading too slowly, read this screenshot carefully, make a plan, and convert any PNG to WebP.
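To make those wins concrete, here is a rough sketch of the kind of check involved: scan a page's HTML for PNG images and for images without lazy-loading. It illustrates the idea only and is not what Claude runs internally. The sample markup is made up.

```python
from html.parser import HTMLParser

class ImageAudit(HTMLParser):
    """Collect <img> tags that are PNGs or that lack loading="lazy"."""

    def __init__(self) -> None:
        super().__init__()
        self.png_sources: list[str] = []
        self.not_lazy: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attr = dict(attrs)
        src = attr.get("src") or ""
        if src.lower().endswith(".png"):
            self.png_sources.append(src)
        if attr.get("loading") != "lazy":
            self.not_lazy.append(src)

sample_html = """
<img src="/images/hero.png" alt="Hero">
<img src="/images/chart.webp" alt="Chart" loading="lazy">
"""

audit = ImageAudit()
audit.feed(sample_html)
print("convert to WebP:", audit.png_sources)
print("missing lazy-loading:", audit.not_lazy)
```

Images above the fold, such as a hero, generally should not be lazy-loaded, so treat the second list as candidates to review rather than a to-do list.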

A few minutes later your mobile score jumps. It is not magic, it is just that you have an engineer who never gets bored of fixing image formats. Steven, one of our community members, built 800+ location pages this way and now pulls 105 appointments per month with pages indexing in under an hour.

What Were the Actual 30-Day Results?

From launch on April 5 to May 11, the site pulled 2,669 sessions, 101 Google Search Console clicks, 2,500+ Bing clicks, and citations across ChatGPT, Perplexity and Copilot. Zero backlinks.

Here is the breakdown that surprised me most:

- Organic search drove the majority of sessions across Google, Bing and Yahoo

- Bing was the biggest traffic source, which matters because AI search traffic converts 4.4x better than traditional organic, and Bing powers Copilot plus a lot of ChatGPT browsing

- Six days in, the site was already ranking for 85+ queries

- By week 4, the Bing Webmaster Tools AI Performance dashboard was showing real citations inside ChatGPT

The lesson: if you are ignoring Bing Webmaster Tools, you are leaving a huge slice of AI traffic on the table. For some industries, especially desktop-heavy and work-laptop niches, Bing will out-perform Google for months.

Frequently Asked Questions

Do AI-built websites actually rank on Google?

Yes, if the content is genuinely useful and the technical SEO is solid. Google does not penalize AI-generated content as a category; it penalizes thin, unhelpful content. The ranking factors that matter are quality, relevance, structure, and Core Web Vitals, regardless of who or what wrote the page.

Can I really skip backlinks?

For low-competition local niches with weak incumbents, yes, at least for the first 30-90 days. Once you want to compete in saturated head terms, backlinks come back into play, but you can build a meaningful audience and revenue stream long before that point.

Why use Astro instead of WordPress?

Astro outputs static HTML with zero JavaScript by default, so it is faster and cleaner for search engines to crawl. WordPress can get there with the right plugins, but Astro is faster out of the box and pairs natively with Claude Code and Cloudflare for deploys.

How do I get cited by ChatGPT and Perplexity?

Structure your content so each section answers a specific question in the first 40-60 words, then expands. That is the capsule content method, and an analysis of 8,000+ AI citations found that this is the structure generative search tends to pick up.
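If you want to check a draft against that structure, a simple linter helps. The sketch below assumes the draft is Markdown with `## ` headings. The function name and the 60-word ceiling are illustrative choices taken from the range above, not an official rule.

```python
import re

def capsule_report(markdown: str, max_words: int = 60) -> list[dict]:
    """For each '## ' section, report whether the heading is a question
    and whether the first paragraph fits within max_words."""
    report = []
    sections = re.split(r"^## +", markdown, flags=re.MULTILINE)[1:]
    for section in sections:
        heading, _, body = section.partition("\n")
        paragraphs = [p.strip() for p in body.split("\n\n") if p.strip()]
        first = paragraphs[0] if paragraphs else ""
        words = len(first.split())
        report.append({
            "heading": heading.strip(),
            "is_question": heading.strip().endswith("?"),
            "first_paragraph_words": words,
            "within_limit": 0 < words <= max_words,
        })
    return report

sample = (
    "## How long does it take to rank?\n\n"
    "Most new sites take a few months.\n\n"
    "More detail here.\n\n"
    "## Background\n\n"
    + " ".join(["word"] * 80)
    + "\n"
)

for row in capsule_report(sample):
    print(row)
```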

Do I need to know how to code?

No. The entire build in this video was done in plain English. The skill you need is being clear about what you want and verifying that what Claude built actually matches your spec.

Ready to Build Sites That Rank Themselves?

If you want the exact workflow I use, including the capsule content method, the Claude Code SEO setup, and the new 7-day SEO action plan we just released, join the AI Ranking community. Membership also includes unlimited access to Datawise, the SEO tool you saw at the start of the video.

If you have questions about anything in this build, drop them in the YouTube comments. I read every one.

Resources

- Watch the full video on YouTube

- How to Make a Website with Claude & Astro (full tutorial)

- Capsule Content Method

- How to Do SEO for SearchGPT

- SEO in the Age of AI

- Turn Claude 4 Into Your Personal SEO Assistant

- Ahrefs: AI Search Traffic Converts 4.4x Better

- Search Engine Land: Insights From 8,000 AI Citations

- AI Ranking Community

Watch Me Design, Launch and Rank a Website in 30 Min (With Claude)

Four Claude Code systems run my entire SEO workflow under one roof: keyword research, a content writer, a site health audit, and a content refresher. They feed each other like an SEO team would, ship blog posts that follow the capsule content method, and run on a Monday 9am cron. One repo, four prompts, free organic traffic.

Why bother building an SEO system inside Claude Code?

Because most SEO tools force you to bounce between five tabs to ship one blog post, and the boring tasks (the ones that actually move rankings) are the ones you skip. I built four systems that connect under one roof and feed each other like an actual SEO team would. The result on one site was 14.4M impressions and 90,000 organic clicks. Two newer sites started pulling organic traffic the week they launched.

No ads, no agency, no five-tool stack. Just Claude Code running the work.

And this matters more in 2026 than ever, because AI search engines now convert roughly five times better than traditional organic traffic, but only if your content is structured to be cited. These systems are what get you there without burning a weekend.

What are the 4 Claude Code SEO systems?

The four systems are keyword research, a content writer, an on-site audit, and a content refresher. Each one is a skill Claude Code can run on command, and each one outputs into a shared dashboard so the next system knows what the previous one did.

- System 1 — Keyword research: Builds a keyword bank + fan-out cluster + content queue. Run once a month.

- System 2 — Content writer: Drafts ranking-ready blog posts using the capsule method. Run weekly.

- System 3 — On-site audit: Pulls a full health report via DataForSEO. Run fortnightly.

- System 4 — Content refresher: Flags decaying or de-indexed posts to rewrite. Run monthly.

The trick is they share state. The keyword researcher knows what's already been covered, so the content writer can't cannibalise itself. The audit knows which pages exist. The refresher knows which ones are dying.

What do you need before you start?

You need a project folder, Claude Code, and a DataForSEO key. That's it.

- A project folder on your local machine with your business info inside (services, locations, brand voice, USPs)

- Claude Code installed (desktop app or CLI, which I prefer for SEO automations)

- A free DataForSEO account (this link gives you $5 free credit instead of $1, pay-as-you-go after that)

- The GitHub repo with all four systems pre-built: github.com/NicoSKOOL/the-four-systems

Hand Claude Code the repo link and tell it to install the systems for this business. Eight to ten minutes later, all four skills are wired up and pulling your business context. Auto-mode helps here so it stops asking permission every 30 seconds.

If you've never connected Claude Code to MCP servers before, watch my SEO Command Center setup video first, then come back.

How does System 1 (keyword research) work?

You type a service or topic, and Claude Code runs the keyword research skill, builds a fan-out cluster, and saves it to a dashboard.

In my example I ran it for “therapeutic gardening”. A couple of minutes later I had 31 keywords, a CSV file, and a live HTML dashboard. The dashboard has two things that matter:

- A keyword bank of every keyword in the cluster, with status flags so Claude knows which ones it's already targeted (this is what stops content cannibalisation)

- A fan-out cluster of supporting keywords that should appear as H2s or H3s inside the eventual blog post

So by the time System 1 is done, System 2 already knows exactly what to write and which headings to use. You only need to run keyword research once a month, or whenever you run out of content.
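The status-flag idea is simple enough to sketch. This is a hypothetical data model, not the repo's actual format: the class, field names and status values are assumptions, and only "therapeutic gardening" comes from the example above.

```python
from dataclasses import dataclass, field
from typing import Optional

@dataclass
class Keyword:
    phrase: str
    status: str = "queued"  # hypothetical flags: "queued" or "published"
    fan_out: list[str] = field(default_factory=list)  # supporting H2/H3 terms

def next_keyword(bank: list[Keyword]) -> Optional[Keyword]:
    """Return the first keyword that has not been written about yet."""
    for keyword in bank:
        if keyword.status == "queued":
            return keyword
    return None

def mark_published(keyword: Keyword) -> None:
    """Flag a keyword as covered so it is never targeted twice."""
    keyword.status = "published"

bank = [
    Keyword("therapeutic gardening", status="published"),
    Keyword(
        "therapeutic gardening benefits",  # made-up cluster entry
        fan_out=["what is therapeutic gardening", "who is it for"],
    ),
]

target = next_keyword(bank)
print(target.phrase if target else "nothing queued")
```

Because the writer only ever pulls queued entries, two posts cannot target the same keyword, which is the cannibalisation guard described above.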

This is the same fan-out logic Google's AI uses to decide what to cite, which is why fan-out queries are the unlock for ChatGPT citations too.

How does System 2 (the content writer) write ranking blog posts?

You tell Claude Code “write the next blog post”. It pulls from the keyword bank, drafts a post using the capsule content method, and outputs an MD file or publishes directly to your site.

Specifically, the content writer does six things automatically:

- Injects your experience from the business files (first-person stories, real numbers, anything that smells like E-E-A-T)

- Targets the primary keyword in the title and an H1, and the fan-out keywords in H2s and H3s

- Writes ~70% in the capsule method (H2s phrased as questions, answered in the first one or two sentences)

- Cites high-trust sources like government domains, official health bodies, primary research

- Internally links across the site because it reads your sitemap

- Adds a TL;DR block at the top, which is now best practice for getting cited by AI search

If your site is built on Astro, the post publishes itself to the live site without you ever opening a CMS. If you're on WordPress, you get a clean MD file to paste in, and the WordPress REST API can automate that part too.

Community win: Inside the AI Ranking community, Steven used a version of this exact workflow to index more than 800 local service pages, which generated 105 booked appointments in a single month from organic traffic alone. No ads.

Don't worry about making posts longer. Worry about making them better. The content writer was tuned for citation-readiness, not word count.

How does System 3 (the on-site audit) work?

You run “audit the site”, Claude Code calls DataForSEO, and you get a full health report inside the dashboard with prioritised fixes.

On the test site, it returned an on-page score of 97/100 and an SEO score of 99/100, plus a list of broken links and slow pages to fix. Total DataForSEO cost: about 48 cents. With the $5 free credit, you can run this 10 times before paying anything.
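The "10 times" figure is just the credit divided by the per-run cost. Working in cents avoids floating-point rounding:

```python
CREDIT_CENTS = 500      # $5 free credit, from the text
AUDIT_COST_CENTS = 48   # about 48 cents per audit on the test site

full_runs = CREDIT_CENTS // AUDIT_COST_CENTS
leftover_cents = CREDIT_CENTS % AUDIT_COST_CENTS

print(f"{full_runs} full audits, {leftover_cents} cents left over")
```

Larger sites will cost more per run, so treat 48 cents as a data point from one small site.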

The audit also tells you exactly which fixes to do first. If your site is on Astro and Claude Code can edit it directly, you can tell Claude to fix them for you. If you're on WordPress, you do the fixes manually but at least you know what to fix.

Run this once a fortnight, or at the very least once a month. Most people skip on-page audits because the data is overwhelming. Claude's job is to do the distilling for you.

How does System 4 (the content refresher) work and why does nobody run it?

The content refresher reads your Google Search Console data, finds blog posts that are decaying or de-indexed, and tells you exactly which ones to rewrite. Almost nobody runs this, and it's the highest-ROI system of the four.

Here's why it matters: only around 60% of the blog posts you publish stay indexed. Google has been getting much stricter about what it keeps in its index, and “Crawled - currently not indexed” is Google's passive-aggressive way of saying it read your content and didn't think it was worth keeping.

When you run the refresh recommender, Claude:

- Pulls your Search Console coverage data

- Flags pages that are decaying in rank or dropped from the index

- Tells you whether to rewrite, merge, or kill each one

- Optionally rewrites the page using System 2 so the new draft inherits your business context and the capsule method
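The rewrite, merge, or kill decision can be pictured as a small rule set. The sketch below is illustrative only: the field names, the 50% decay threshold and the rules themselves are assumptions, not the logic the skill actually uses.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class PageStats:
    url: str
    indexed: bool
    clicks_prev: int  # clicks in the earlier comparison period
    clicks_now: int   # clicks in the most recent period
    duplicate_of: Optional[str] = None  # URL of an overlapping post, if any

def recommend(page: PageStats, decay_threshold: float = 0.5) -> str:
    """Return 'merge', 'rewrite', 'kill' or 'keep' for one page."""
    if page.duplicate_of:
        return "merge"
    if not page.indexed:
        # A de-indexed page that once earned clicks is worth saving.
        return "rewrite" if page.clicks_prev > 0 else "kill"
    if page.clicks_prev > 0 and page.clicks_now < page.clicks_prev * decay_threshold:
        return "rewrite"
    return "keep"

pages = [
    PageStats("/blog/a", indexed=True, clicks_prev=100, clicks_now=30),
    PageStats("/blog/b", indexed=False, clicks_prev=0, clicks_now=0),
    PageStats("/blog/c", indexed=True, clicks_prev=100, clicks_now=90),
    PageStats("/blog/d", indexed=True, clicks_prev=50, clicks_now=40, duplicate_of="/blog/a"),
]

for page in pages:
    print(page.url, recommend(page))
```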

This is the half of the job most people skip. Generating new content is only 50% of SEO. The other 50% is keeping the content you already have alive.

How do you put all four systems on a schedule?

You tell Claude Code to turn the workflow into a routine, and it sets it as a local automation.

The simplest version is one sentence:

“Set this workflow to run every Monday at 9:00 AM.”

Claude Code registers it as a routine. If you're using the desktop app, the catch is that your computer has to be on at run time. If you're using the CLI, you can run it as a cron job in headless mode, which is what I do across multiple sites.

If you want this fully cloud-based, you'd need to move the MCP connections (Search Console, DataForSEO) to the cloud too, which is more setup than most people want. Local cron is the 80/20.
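In standard cron syntax, "every Monday at 9:00 AM" is `0 9 * * 1`. If you want to double-check when a weekly schedule will next fire, the date arithmetic is short. This sketch assumes local time and is not part of the four systems.

```python
from datetime import datetime, timedelta

def next_weekly_run(now: datetime, weekday: int = 0, hour: int = 9) -> datetime:
    """Return the next run at hour:00 on weekday (Monday is 0), strictly after now."""
    candidate = now.replace(hour=hour, minute=0, second=0, microsecond=0)
    candidate += timedelta(days=(weekday - now.weekday()) % 7)
    if candidate <= now:
        # Today is the run day but the slot has already passed.
        candidate += timedelta(days=7)
    return candidate

print(next_weekly_run(datetime.now()))
```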

And yes, before you ask, this all works in Codex with GPT-5.5 too. Same architecture, different runtime.

Frequently Asked Questions

Do I have to use Astro for this to work?

No. Astro just lets Claude publish posts directly without touching a CMS. WordPress, Webflow, and Framer all work; you just plug into their APIs or paste the MD file manually.

How much does DataForSEO cost to run all four systems?

The on-site audit was 48 cents per run on a small site. Keyword research is a few cents per cluster. Even if you ran the full stack weekly, you'd burn through the free $5 credit in a couple of months.

Can I run this in Codex instead of Claude Code?

Yes. I have a full walkthrough on running the same workflow with Codex and GPT-5.5. The systems are agnostic, the runtime isn't.

What's the capsule content method?

A blog structure where every H2 is a question and the first sentence answers it directly, so AI search engines can lift the answer cleanly. Full breakdown here.

Will the content writer trigger an AI penalty?

No. Google has publicly said AI content is fine when it's helpful. The reason this workflow doesn't trip penalties is the business context injection, the source citations, and the capsule structure. That's what “helpful” looks like.

Want me to set this up for you?

If you'd rather skip the wiring step and learn this inside a community of people running it in production, AI Ranking is where the full workflow lives. Live SEO audits every Thursday, weekly tutorials on systems like these, and a private repo of the agents, skills, and prompts I use across every site.


I Built 4 Claude Code Systems That Run My Entire SEO Workflow (14.4M Impressions)

Connect Codex to Google Search Console and DataForSEO, and GPT-5.5 can run five SEO tasks for you on a schedule: a site health report, a click-through-rate rewrite agent, a content-idea agent, an internal-link agent, and a self-improving content engine. Two free API connections, five prompts, and your SEO does itself.

Why automate SEO with Codex in the first place?

Because the boring SEO tasks (the ones you actually skip) are the ones that move rankings. Pulling Search Console data, rewriting weak titles, finding pages with high impressions and zero clicks, hunting for content gaps. None of that is hard, it's just tedious. Codex with GPT-5.5 does it on a schedule and emails you the results.

And it matters more than ever in 2026. Traffic from AI search engines converts roughly 5x better than traditional organic, but only if your pages are structured to be cited. The agents below are what get you there without burning a weekend on it.

A quick aside before we dive in

I know it seems like I keep swapping from Claude to ChatGPT. Trust me, I don't want to give you shiny object syndrome, and I'm not telling you to jump ship to another AI for your SEO.

But if you're already using something in the OpenAI world, then you might want to know about this. Codex has a few things going for it right now (local automations, scheduling, GPT-5.5's tool use) that genuinely fit this workflow.

I'm here to help, I swear. Use whatever tool actually moves the needle for you.

What do you need before you start?

Two free things and one paid tool you probably already have.

- A Google Cloud project with the Search Console API enabled (free, takes 90 seconds)

- A free DataForSEO account (this link gives you $5 credit instead of $1, and it's pay-as-you-go so you don't get charged if you're idle)

- Codex running locally with GPT-5.5 selected (Codex high works too, but 5.5 is the sweet spot)

If you already have Ahrefs, you can skip DataForSEO and connect Ahrefs directly to Codex. Same outcome.

How do you connect Codex to Google Search Console?

You give Codex one OAuth credentials JSON file and it handles the rest.

- Open Google Cloud Console, create a project, then go to APIs & Services.

- Search for Google Search Console API and enable it.

- Go to Credentials, create an OAuth client ID, choose Desktop app, name it "Codex", and download the JSON file.

- In Codex, start a project in your site's folder and paste this prompt:

"Connect to the Google Search Console API using this OAuth credentials file. Authenticate me in the browser, save the token locally, then use the Search Console API to read my site's performance data."

A browser window pops up. Log in with the Google account that owns the Search Console property (this part trips people up: it has to be the right account). Click allow, and Codex saves the token. From now on, every chat in this project has live Search Console access.
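Under the hood, "read my site's performance data" comes down to a Search Analytics query. Here is a sketch of what that request looks like, built without sending it. A real call is a POST with an OAuth bearer token. The helper name, the 28-day window and the example.com property are assumptions.

```python
from datetime import date, timedelta
from urllib.parse import quote

def search_analytics_request(site_url: str, end: date, days: int = 28) -> tuple[str, dict]:
    """Build the endpoint and JSON body for a Search Analytics query."""
    endpoint = (
        "https://www.googleapis.com/webmasters/v3/sites/"
        f"{quote(site_url, safe='')}/searchAnalytics/query"
    )
    body = {
        "startDate": (end - timedelta(days=days)).isoformat(),
        "endDate": end.isoformat(),
        "dimensions": ["page", "query"],
        "rowLimit": 1000,
    }
    return endpoint, body

# Domain properties use the "sc-domain:" prefix as their site URL.
endpoint, body = search_analytics_request("sc-domain:example.com", date(2026, 1, 31))
print(endpoint)
print(body)
```

Each row that comes back carries clicks, impressions, CTR and average position for the requested dimensions, which is the raw material the agents further down work from.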

How do you connect DataForSEO to Codex?

Grab two credentials and install the MCP server.

In your DataForSEO dashboard, go to API Access and copy your login email and password. If you've never logged in, the password is shown once at the top, so save it. If you've logged in before, request a password reset and check your email.

Then ask Codex to install the DataForSEO MCP server with those credentials. Test it by asking Codex to pull ranked keywords for your domain. If you see a clean list back, you're good.

That's the whole setup. Two API connections, ten minutes, and Codex now has access to your Search Console performance and a full SEO dataset (keyword volumes, SERP data, Lighthouse scores, competitor intelligence).

What are the 5 SEO agents you can build?

All five live as prompts in the Google Doc linked in the video description. Drop them into Codex one by one.

1. Site health report agent

A weekly on-page audit. Codex pulls Lighthouse data, checks for broken links, scores on-page SEO, and flags pages with high impressions but zero clicks. The first run on my Astro site came back with a 97 on-page score, no broken links, and a list of "pages to investigate" including a blog with average position 1.6 (basically ranking #1) but zero clicks. That's a title-tag problem, and Codex tells you exactly which one.

2. Click-through rewrite agent

Most people obsess over Google AI Overviews and forget that a strong title tag and meta description still drive the bulk of clicks. This agent finds pages with high impressions and weak click-through rates, runs the keyword through DataForSEO, analyzes the titles and meta descriptions of whoever ranks #1, and writes you better versions. Same impressions, more clicks, more traffic. It's the laziest win in SEO.
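The first step, picking pages with lots of impressions and a weak click-through rate, is a plain filter over Search Console rows. In this sketch the thresholds and sample rows are made up for illustration, so tune them to your own traffic levels.

```python
def ctr_candidates(rows: list[dict], min_impressions: int = 500, max_ctr: float = 0.01) -> list[dict]:
    """Return pages with enough impressions and a CTR at or below max_ctr,
    sorted so the biggest missed opportunities come first."""
    picks = [
        row for row in rows
        if row["impressions"] >= min_impressions
        and row["clicks"] / row["impressions"] <= max_ctr
    ]
    return sorted(picks, key=lambda row: row["impressions"], reverse=True)

rows = [
    {"page": "/blog/a", "impressions": 4000, "clicks": 0},
    {"page": "/blog/b", "impressions": 1200, "clicks": 90},
    {"page": "/blog/c", "impressions": 300, "clicks": 0},
]

for row in ctr_candidates(rows):
    print(row["page"], row["impressions"], row["clicks"])
```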

3. Content idea agent

Instead of generic keyword research, this agent reads your Search Console data, understands what your site is already winning at, then suggests new keywords that fit your business and have realistic difficulty. It outputs target keywords, suggested URLs, and the reason each one fits. This is the one that saves the most time, because thinking up topics is the bottleneck.

4. Internal link agent

The fourth prompt scans your site for internal linking opportunities. Codex finds pages on related topics that aren't linked to each other, then proposes the exact anchor text and source paragraph. It's the kind of work no one does manually, but it compounds rankings.
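Stripped to its core, finding related pages that aren't linked to each other is a pairwise comparison. The sketch below assumes you already have each page's topics and outgoing internal links. That data shape and the sample pages are hypothetical, and proposing anchor text is left to the agent.

```python
from itertools import combinations

def link_opportunities(pages: dict[str, dict]) -> list[tuple[str, str]]:
    """pages maps URL -> {"topics": set of topics, "links": set of linked URLs}.
    Returns (source, target) pairs that share a topic but lack a link."""
    suggestions = []
    for a, b in combinations(sorted(pages), 2):
        if pages[a]["topics"] & pages[b]["topics"]:
            if b not in pages[a]["links"]:
                suggestions.append((a, b))
            if a not in pages[b]["links"]:
                suggestions.append((b, a))
    return suggestions

pages = {
    "/fuel-prices": {"topics": {"fuel", "prices"}, "links": {"/fuel-imports"}},
    "/fuel-imports": {"topics": {"fuel", "imports"}, "links": set()},
    "/about": {"topics": {"company"}, "links": set()},
}

for source, target in link_opportunities(pages):
    print(f"{source} should link to {target}")
```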

5. Self-improving content agent

The fifth one publishes blog posts. With your custom knowledge files loaded into the project, Codex can run on a schedule (Monday, Wednesday, whatever you set), pull a target keyword from agent #3, write the post, and save it to your repo. If you're on Astro, this is genuinely close to a self-writing website. WordPress users can do the same with a small wrapper.

How do you turn these agents into automations?

Once an agent's output looks right, ask Codex:

"Set this as an automation to run every Tuesday at 9:00 AM."

Codex registers it as a local automation. The catch: because it runs locally, your computer has to be on. I run mine Monday to Friday, 9 to 5, which matches when my computer is on anyway. If you want this fully cloud-based, it's possible but takes more setup.

You can also tell Codex to email you the output every run, which is what makes this actually useful. SEO reports you don't read are worse than no reports.

Frequently Asked Questions

Do I need to pay for Codex?

You need a ChatGPT subscription that includes Codex (Plus or higher). DataForSEO is pay-as-you-go and the free $5 credit covers a lot of testing.

Why GPT-5.5 specifically?

Codex high works, but 5.5 is consistently better at multi-step agent work and following long prompts without losing the thread. Use 5.5 unless you have a reason not to.

Can I use Claude or Gemini instead?

Yes, but Codex's local automations and tool-use are smoother right now. I find OpenAI's usage limits more workable than Claude's for this kind of repetitive agent work.

Will this work on WordPress?

Yes. The reporting and idea agents work on any site. The self-publishing agent needs a way to push to your CMS, which is straightforward with the WordPress REST API.

Is this the same as setting up an MCP server in Claude?

Conceptually yes. DataForSEO provides an MCP server, and you're plugging it into Codex the same way you'd plug it into Claude. The difference is Codex has scheduled local automations baked in.

Want help setting this up?

If you're new to AI search SEO, the agents above will move faster with the right foundation underneath them. Inside AI Ranking, I teach the full workflow: from site structure to capsule content to getting cited by AI search engines. Live SEO audits every Thursday, weekly tutorials, and a community of people running this stuff in production.


5 SEO Tasks Codex Can Automate With GPT-5.5
